Use case 2 (Philips / Verum / TNO-ESI / Unit040)

Interventional application

Philips’ Azurion (see figure above) is an image-guided therapy platform designed to provide image guidance during minimally invasive treatment for a wide range of procedures. Vivaldy Use Case 2 focuses on efficient testing and verification of Azurion’s Interventional Applications (IApps). IApps provide clinicians with additional tooling that assists with various procedure steps, such as planning, image acquisition, catheter navigation and reporting. The application used for this demo is SmartCT, a 3D visualization and 3D measuring tool that works with Azurion’s interventional X-ray system.

The SmartCT solution enriches the 3D interventional tools with clear guidance, designed to remove barriers to acquiring 3D images in the interventional lab. It simplifies 3D acquisition to empower all clinical users to easily perform 3D imaging, regardless of their experience.

Before an update or upgrade of the application is brought to market, it has to be tested to assess its functionality and to mitigate the risk of introducing defects elsewhere in connected devices or applications. The goal of use case 2 is to improve testing efficiency with automated system-level tests, impact analysis models, and a virtual test platform (an interventional X-ray system in which large hardware parts have been virtualized), all connected to a continuous integration and (internal) deployment pipeline.

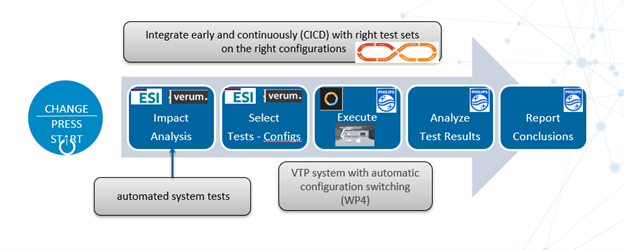

The figure above shows the process of testing a change to an application. First, the impact of this change on various software and hardware components should be assessed. Also, the affected system configurations in the platform should be identified to determine which test systems are required. Based on this information the appropriate test set for each affected configuration is selected, which should provide the confidence that all requirements are met and existing functionality is still intact. Next, the selected tests are executed on the selected configurations, failed tests are analyzed, and results are reported. Traditionally, all steps are (for a large part) performed manually. Particularly the impact analysis is a complicated endeavor, due to the many dependencies and interactions between various components of a complex system of systems like the Azurion platform. Doing this analysis manually requires several subject matter experts to assess the impact of a change, which costs a large amount of effort and is error-prone. Ideally, we would like to be able to press a “magic” button that automatically determines the impact of a change, selects the right tests and system configurations, and automatically executes them on the right test systems. In Vivaldy, impact analysis, selecting tests and configurations, and daily execution of tests have all been automated.
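The steps described above (assess impact, identify affected configurations, select tests per configuration) can be illustrated with a minimal sketch. It assumes a simple component dependency graph and a hand-written mapping from tests to components and configurations. All component names, test names and data structures below are hypothetical; this is not the tooling developed in Vivaldy, only an illustration of the idea.

```python
from collections import deque

# Hypothetical dependency graph: component -> components that depend on it.
DEPENDENTS = {
    "smartct_viewer": ["smartct_app"],
    "acquisition_api": ["smartct_app", "xray_control"],
    "smartct_app": [],
    "xray_control": [],
}

# Hypothetical test catalogue: components each test exercises and the
# system configurations on which it is meaningful.
TESTS = {
    "test_3d_measurement": {"components": {"smartct_viewer"}, "configs": {"monoplane", "biplane"}},
    "test_3d_acquisition": {"components": {"smartct_app", "acquisition_api"}, "configs": {"monoplane"}},
    "test_table_movement": {"components": {"table_control"}, "configs": {"monoplane"}},
}


def impacted_components(changed):
    """Return the changed components plus everything that transitively depends on them."""
    seen = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for dependent in DEPENDENTS.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


def select_tests(changed):
    """Map each affected configuration to the sorted list of tests to run on it."""
    impacted = impacted_components(changed)
    plan = {}
    for name, info in TESTS.items():
        if info["components"] & impacted:  # test touches an impacted component
            for config in info["configs"]:
                plan.setdefault(config, []).append(name)
    return {config: sorted(names) for config, names in plan.items()}


# A change to the (hypothetical) viewer component.
plan = select_tests(["smartct_viewer"])
```

In this sketch the resulting plan lists only the tests that touch an impacted component, grouped by the configuration they must run on. A real impact analysis also has to account for behavioral dependencies that a static graph like this one does not capture.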

The change impact analysis of Verum aims to capture the knowledge of subject matter experts in models, in order to determine the minimal required set of tests. This set must cover both the modified code and the untouched code that may behave differently as a result of modifications elsewhere. The method is based on partitioning a system into separate functional module specifications as well as an overall functional specification. When the behavior of a modified module implementation complies with the corresponding unmodified module specification, it cannot affect other modules; otherwise it may. The compliance of a module implementation with its module specification is asserted through Model Based Testing (or Model Checking when implemented in Dezyne). The information at the module level is used to determine which behavior across all modules to exercise in order to verify that the system complies with its overall functional specification.
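The firewall rule described above can be sketched as follows. The sketch assumes that a module specification is given as a small deterministic state machine and that compliance is approximated by checking observed event traces against it. This is a strong simplification of Model Based Testing and not how Dezyne works internally; the specification, event names and helper functions are hypothetical.

```python
# Hypothetical module specification as a deterministic state machine:
# state -> {event: next state}.
SPEC = {
    "idle": {"start_scan": "scanning"},
    "scanning": {"scan_done": "reconstructing", "abort": "idle"},
    "reconstructing": {"volume_ready": "idle"},
}


def complies(trace, spec, initial="idle"):
    """Return True if the specification allows every event of the trace, in order."""
    state = initial
    for event in trace:
        transitions = spec.get(state, {})
        if event not in transitions:
            return False  # the implementation did something the spec forbids
        state = transitions[event]
    return True


def modules_to_retest(module, neighbours, observed_traces, spec):
    """Apply the firewall rule: neighbours need retesting only when compliance fails."""
    if all(complies(trace, spec) for trace in observed_traces):
        return [module]
    return [module] + sorted(neighbours)
```

For example, if all traces observed from a modified module are allowed by `SPEC`, `modules_to_retest` returns only that module. A single non-compliant trace widens the selection to the neighbouring modules.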

The benefits of the Verum Dezyne approach are:

- Interfaces and modules act as change firewalls

- Formalizing subject matter expert knowledge makes it available for automated test selection

- System specifications are derived from the composition of module specifications by means of labeled transition system (LTS, a model describing state behavior) stitching

- Or they may be written independently and verified through Model Checking

- Verification of existing implementations is supported by means of Model Based Testing

- The information contained in models is shared and exchanged amongst the product development trinity members: business, design & implementation, and quality assurance.
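To give an impression of how a system-level LTS can be derived from module-level ones, the sketch below builds a textbook parallel composition of two deterministic LTSs: labels that occur in both modules must be taken together, all other labels interleave. This is an assumption made for illustration only; the LTS stitching in Dezyne may differ, and the module names and labels are hypothetical.

```python
from collections import deque


def compose(lts_a, init_a, lts_b, init_b):
    """Build the reachable parallel composition of two deterministic LTSs.

    Each LTS is a dict: state -> {label: next state}. Labels that occur in
    both LTSs synchronize; all other labels interleave.
    """
    alphabet_a = {label for moves in lts_a.values() for label in moves}
    alphabet_b = {label for moves in lts_b.values() for label in moves}
    shared = alphabet_a & alphabet_b

    product = {}
    queue = deque([(init_a, init_b)])
    while queue:
        state = queue.popleft()
        if state in product:
            continue
        a, b = state
        moves = {}
        for label, nxt in lts_a.get(a, {}).items():
            if label not in shared:
                moves[label] = (nxt, b)              # A moves alone
            elif label in lts_b.get(b, {}):
                moves[label] = (nxt, lts_b[b][label])  # A and B move together
        for label, nxt in lts_b.get(b, {}).items():
            if label not in shared:
                moves[label] = (a, nxt)              # B moves alone
        product[state] = moves
        queue.extend(moves.values())
    return product


# Two hypothetical modules that synchronize on "image_ready".
acquisition = {"idle": {"start": "busy"}, "busy": {"image_ready": "idle"}}
viewer = {"empty": {"image_ready": "showing"}, "showing": {"clear": "empty"}}

system = compose(acquisition, "idle", viewer, "empty")
```

In the composed system, the state `("busy", "showing")` only allows `clear`: the acquisition module cannot deliver a new image until the viewer is ready to accept it. Cross-module behavior of this kind is what a system-level specification has to describe.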

The examples on this separate page demonstrate the different language features developed in the Vivaldy context according to the process depicted there.

The Vivaldy work is part of the Dezyne roadmap and is expected to become available according to the following release plan, see https://verum.com (commercial) and https://dezyne.org (technical) for updates:

- 2.17 constraining interfaces Q1

- 2.18 interface shared state Q1

- 2.19 LTS based testing and LTS stitching Q2

- 2.20 module Q3

In the Vivaldy project, TNO-ESI focused on the BDD (behavior-driven development) method, which is widely adopted by industry nowadays. Despite its rising popularity, there is growing recognition that adoption of BDD incurs significant maintenance costs and reduces team productivity in the long run. In addition, creating new test scenarios from requirements specifications is recognized as a manual and time-consuming activity that achieves only limited requirements coverage. The problem is further aggravated by the large variability space that results from the many supported product configurations.

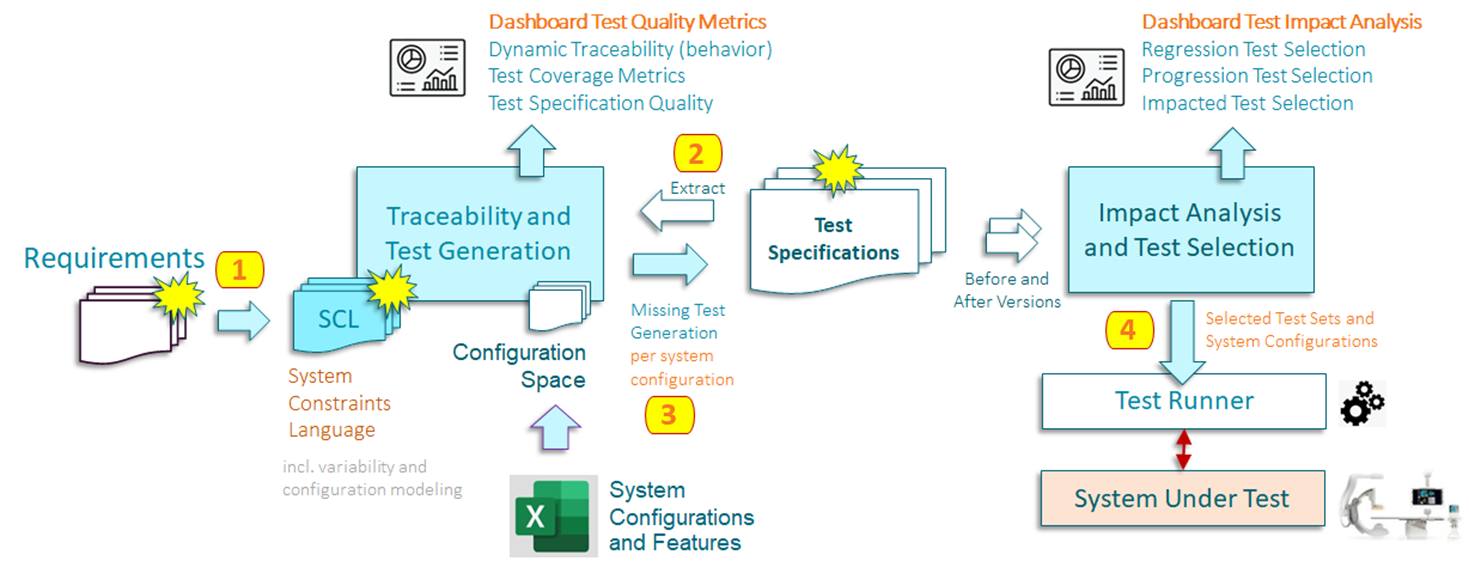

To address these concerns, TNO-ESI has developed a methodology for change impact analysis (based on requirements specifications) and test selection for software product lines. The techniques developed to support this method (impact analysis, test selection and test generation) combine results from state-of-the-art process mining and model-based testing research. To support the application of the method in practice, we developed a generic and extensible proof-of-concept tooling framework called SpecDiff. The method is presented in the figure below and further explained in the context of SpecDiff in the video that follows. Note that the yellow clouds in the figures represent a change to the indicated artefacts.

To address the lack of standards for capturing the (behavioral) intent of requirements, we proposed the systems constraints language (SCL) and investigated its conceptual relation to industry standards such as SysML. By formally capturing functional requirements in models, the test teams can focus their efforts on ensuring that requirement specifications are as complete as possible. Based on such specifications, we show that it is possible to automatically evolve a test suite (generating new tests and indicating impacted ones) to reflect a change to requirements. We also show that it is possible to automatically select only the relevant tests, and the system configurations to run them on, to test that the updated system conforms to the specification change.
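The idea of evolving a test suite from a specification change can be sketched in a few lines. The sketch assumes that each specification version is represented as a set of `(state, event, next state)` transitions and each test as a list of such transitions. This representation and all names are hypothetical; the sketch shows neither SCL nor the algorithms used in SpecDiff.

```python
def diff_specs(old, new):
    """Return the (added, removed) transitions between two specification versions."""
    return new - old, old - new


def classify_tests(tests, old, new):
    """Return the tests impacted by the change and the new behavior no test covers yet."""
    added, removed = diff_specs(old, new)
    # A test is impacted when it relies on a transition that no longer exists.
    impacted = sorted(name for name, steps in tests.items() if set(steps) & removed)
    # Added transitions without any covering test call for newly generated tests.
    covered = {step for steps in tests.values() for step in steps}
    uncovered = sorted(added - covered)
    return impacted, uncovered


# Hypothetical specification versions: the new one adds a review step.
OLD_SPEC = {
    ("idle", "start_scan", "scanning"),
    ("scanning", "scan_done", "idle"),
}
NEW_SPEC = {
    ("idle", "start_scan", "scanning"),
    ("scanning", "scan_done", "reviewing"),
    ("reviewing", "accept", "idle"),
}

# Hypothetical existing tests, each a sequence of specification transitions.
EXISTING_TESTS = {
    "test_scan_cycle": [("idle", "start_scan", "scanning"), ("scanning", "scan_done", "idle")],
    "test_start_only": [("idle", "start_scan", "scanning")],
}

impacted, uncovered = classify_tests(EXISTING_TESTS, OLD_SPEC, NEW_SPEC)
```

Here `test_scan_cycle` is flagged as impacted because it depends on a removed transition, while `test_start_only` is unaffected and need not be rerun for this change. The two added transitions appear in `uncovered`, indicating where new tests would have to be generated.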

The proposed innovations have been realized in a tool called SpecDiff, with a particular focus on ease of adoption in practice by linking as closely as possible to the technologies and ways of working used in industry.

A demo of the SpecDiff toolset can be seen here:

Executing tests on various configurations requires each of those configurations to be available as a test system. Maintaining such a test park is very expensive, especially considering that switching between configurations takes more than a week (and several weeks if parts have to be ordered). In previous projects, a virtual test platform was developed to run system tests without building up physical systems. The capabilities of this virtual test platform (see figure above) were extended to simulate a wider range of test situations and to switch automatically to a requested system configuration. As an example, the left side of the above figure displays a monoplane configuration of the Azurion. After automatically switching configurations, the virtual system has been changed into a bi-plane system (containing a second, lateral arc). With the option to dynamically select system configurations, the need for expensive physical test systems becomes much smaller. See also the video.

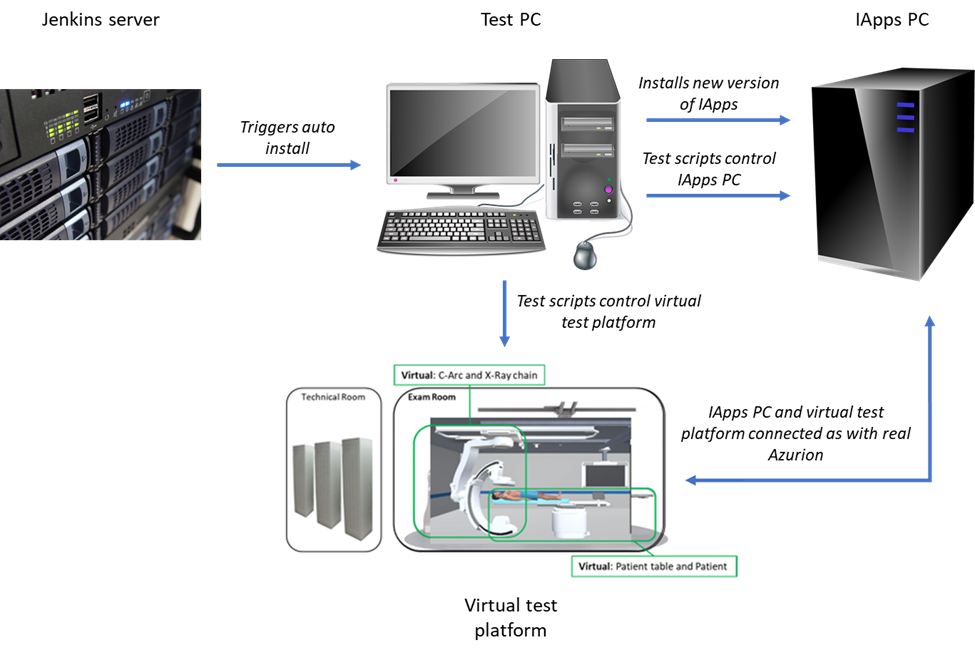

The figure above depicts the test setup that is used to test Interventional Applications with the virtual test platform. After installing the right version of the Interventional Applications software (if needed), a Jenkins server uses the input from the impact analysis and triggers the execution of the required test set via a test PC, which is in turn connected to both the Interventional Applications PC and the virtual test platform. This makes it possible to execute the entire test set, based on the impact analysis, automatically. Being able to use this setup on a daily basis will significantly improve the efficiency of testing and, through more accurate test sets and more testing capabilities, improve the quality of the software.